The astronomical growth in computer data, along with the migration to cloud computing, has fueled the proliferation of large data centers. These facilities are large buildings housing thousands of servers, and they need extensive power and cooling systems to run. The largest of them process data for the likes of Google, Facebook and eBay.

Historically, much of this data processing was done locally in corporate IT facilities. In recent years, many companies have outsourced IT infrastructure and software applications to cloud-based service providers that run large data centers. The benefits to these companies include lower costs, increased flexibility and enhanced processing power.

The number and size of these data centers will continue to grow rapidly. Until now, this growth has been fueled by internet services like those mentioned above, along with the popularity of cloud-based enterprise software products from companies like Salesforce, NetSuite and Workday. Now, large enterprise software providers such as Oracle and SAP are embracing cloud-based solutions as well.

Energy Consumption

Not surprisingly, these data centers consume enormous amounts of energy. According to Jonathan Koomey of Stanford University, U.S. data centers used about 76 billion kilowatt-hours, or about 2% of U.S. electricity, in 2010. Globally, data centers are estimated to account for about 1.3% of electricity use.

This consumption has raised eyebrows and drawn public scrutiny. Much of the criticism concerns the public’s insatiable demand for computing power and the sheer size of the facilities rather than their energy efficiency. In reality, these large centralized facilities are by their very nature much more efficient than smaller, local ones. A good analogy is the energy consumption of a bus compared with that of a car. The bus consumes more energy in total, but much less per passenger.

Fortunately, large service providers have heard the message loud and clear and are racing to increase energy efficiency at their data centers. These improvements are motivated by cost savings, corporate image and a genuine desire to save the planet.

Most energy consumption at these facilities can be categorized as either facility related or computing related.

- Facility related – This is the energy required to support the facility itself, primarily cooling, ventilation and power management. New state-of-the-art facilities use a variety of methods to improve efficiency. The first concern is site selection, to take advantage of the local climate and proximity to efficient power. For example, Google’s most recent facility in Hamina, Finland is located in a former paper mill next to the Gulf of Finland. The location enables this data center to be cooled entirely by sea water. To reduce the environmental impact, this water is conditioned before it is returned to the sea. Service providers have also found that they can safely run the facilities at higher temperatures than previously believed.

- Computing related – This includes the energy required to actually run the servers and related IT equipment within the facility. One of the biggest improvements in this area has been the development of virtualization technologies. These allow companies to combine many “servers” onto one physical box. Replacing inefficient servers, powering down idle servers and efficient load balancing have also contributed to better energy efficiency in computing.

The industry uses a measure called Power Usage Effectiveness (PUE) to calculate data center energy efficiency. This measure reflects the total energy consumption of the complex (including both facility and computing related energy) divided by the energy consumption of the IT equipment alone.

A facility with a PUE of 2.0 would consume 2 kWh of total energy for each kWh consumed by the IT equipment alone. A perfect rating of 1.0 would imply that ALL of the energy used at the facility was consumed by the IT equipment.
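As a rough illustration of the definition above, here is a minimal Python sketch of the calculation. The function names `pue` and `overhead_share` are invented for this example and do not come from any industry tool; the inputs are simply the two ratings discussed above.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy that does NOT reach the IT equipment."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue_value


# 2 kWh of total energy for every 1 kWh used by the IT equipment
rating = pue(total_facility_kwh=2.0, it_equipment_kwh=1.0)
print(f"PUE: {rating:.2f}, overhead share: {overhead_share(rating):.0%}")

# A perfect facility: every kWh goes to the IT equipment
perfect = pue(total_facility_kwh=1.0, it_equipment_kwh=1.0)
print(f"PUE: {perfect:.2f}, overhead share: {overhead_share(perfect):.0%}")
```

Read this way, a PUE of 2.0 means half of the facility's energy goes to cooling, ventilation and power management rather than to computing.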

In 2011, the Uptime Institute surveyed 500 large data centers and estimated that the average PUE was about 1.8, down from 2.5 in prior estimates. While estimates from other organizations vary, it’s clear that the average is falling. Recent mega-facilities at eBay, Google and Facebook have ratings of only 1.35, 1.14 and 1.07 respectively.

To be sure, the PUE is not a perfect measure. In fact, a substantial drop in the IT portion of energy consumption could actually result in a higher PUE! This happens when the facility overhead does not shrink in step with the IT load, so a similar overhead is divided by a smaller IT figure. Regardless, the measure has proven to be a valuable benchmark of the energy efficiency of large data centers. In the race for sustainability, there even seems to be some competition among large service providers to reduce their PUEs.
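To see how a lower IT load can push the PUE up, consider a small sketch with invented numbers. The facility, its kWh figures and the assumption that overhead falls more slowly than the IT load are all hypothetical, chosen only to make the effect visible.

```python
def pue(it_kwh: float, overhead_kwh: float) -> float:
    """PUE from IT energy and facility overhead (cooling, power management, etc.)."""
    return (it_kwh + overhead_kwh) / it_kwh


# Hypothetical facility before consolidating servers
it_before, overhead_before = 1000.0, 500.0
# After consolidation: IT energy drops 40%, overhead (assumed) drops only 20%
it_after, overhead_after = 600.0, 400.0

total_before = it_before + overhead_before
total_after = it_after + overhead_after
pue_before = pue(it_before, overhead_before)
pue_after = pue(it_after, overhead_after)

print(f"Before: total {total_before:.0f} kWh, PUE {pue_before:.2f}")
print(f"After:  total {total_after:.0f} kWh, PUE {pue_after:.2f}")
```

In this made-up case the facility uses a third less energy overall, yet its PUE gets worse. That is why the figure is best read alongside total consumption rather than on its own.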

Future Developments

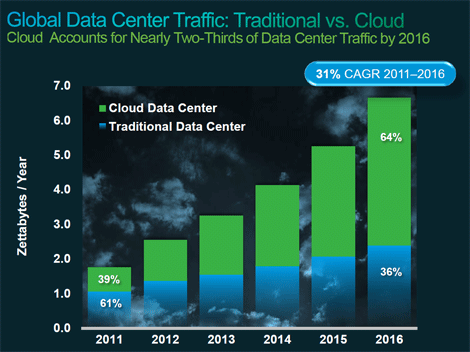

Cisco Systems projects that global data center traffic will grow 300% to 6.6 zettabytes by 2016. A zettabyte is equal to one trillion gigabytes! By 2016, the cloud is expected to make up 64% of that volume.
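For a sense of scale, the figures above can be worked through in a few lines of Python. This is only back-of-the-envelope arithmetic: it assumes decimal (SI) units and reads "grow 300%" as a 300% increase, which means a fourfold rise. The implied starting volume is derived from the numbers in this article and is not a figure quoted from Cisco.

```python
BYTES_PER_GB = 10**9   # decimal gigabyte
BYTES_PER_ZB = 10**21  # decimal zettabyte

# One zettabyte expressed in gigabytes
gb_per_zb = BYTES_PER_ZB // BYTES_PER_GB
print(f"1 ZB = {gb_per_zb:,} GB")

projected_zb = 6.6   # projected 2016 traffic, from the article
cloud_share = 0.64   # expected cloud portion, from the article
growth_pct = 300.0   # stated growth, read here as an increase of 300%

# Cloud portion of the projected traffic
cloud_zb = projected_zb * cloud_share
# Starting volume implied by the growth figure (a derived value, not a quoted one)
implied_baseline_zb = projected_zb / (1 + growth_pct / 100)

print(f"Cloud traffic in 2016: about {cloud_zb:.1f} ZB")
print(f"Implied starting volume: about {implied_baseline_zb:.2f} ZB")
```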

Data center innovation will need to accelerate to accommodate this growth and the race for sustainability will inevitably continue. Here are a few recent trends:

- Industry Collaboration & Certification – In 2011, Facebook established the Open Compute Project. OCP’s goal is to openly share custom data center designs to improve efficiency across the industry. Google, historically very secretive about its data centers, has begun to share more information with the public. The British Computer Society has launched the Certified Energy Efficient Datacentre Award (CEEDA). This certification is similar to the popular LEED certification and recognizes exceptional achievements in green data center design.

- Education – Higher education has acknowledged the need for well-educated individuals to run these data centers sustainably. You can now get an A in Green Data Center Management at Metropolitan Community College in Omaha. California State University at Fullerton also offers a certificate in Green Data Center Management. Other institutions are sure to follow.

- Technological Improvements – Of course, improvements in server technology and facilities management will continue at a blistering pace. One newer development is the use of modular systems, which allow providers to size the facility more efficiently to meet current demand. Virtualization is still in its early stages and will generate even more savings in the coming years.

- Energy Sourcing – Some of these service providers have committed to purchase the energy used at their facilities from sustainable sources such as solar, wind, hydroelectric and geothermal. Improvements in these technologies along with increased availability of renewable energy will further reduce the carbon footprint of data centers.

Large service providers are stepping up to the plate in a big way to provide clean computing power to the world. This should help society absorb the expected increases in data processing demand without undue harm to the environment. More power to them. Actually... make that LESS power!

Thomas Parent blogs for Rackspace Hosting. Rackspace Hosting is the service leader in cloud computing, and a founder of OpenStack, an open source cloud operating system. The San Antonio-based company provides Fanatical Support to its customers and partners, across a portfolio of IT services, including Managed Hosting and Cloud Computing.